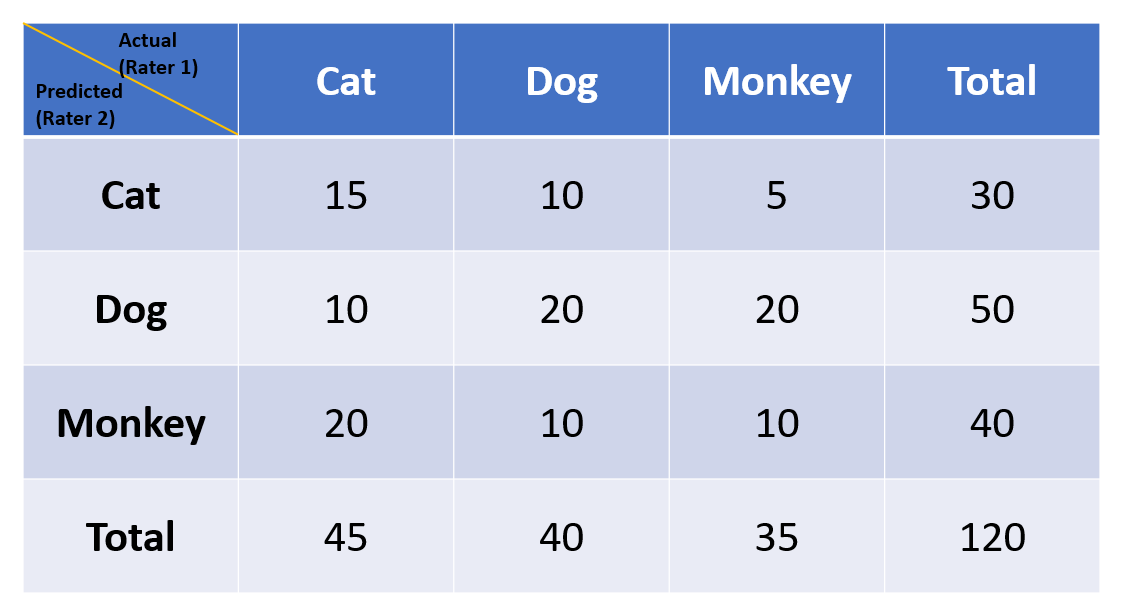

Agree or Disagree? A Demonstration of An Alternative Statistic to Cohen's Kappa for Measuring the Extent and Reliability of Ag

Using appropriate Kappa statistic in evaluating inter-rater reliability. Short communication on “Groundwater vulnerability and contamination risk mapping of semi-arid Totko river basin, India using GIS-based DRASTIC model and AHP techniques ...

Graphical representation of the Cohen's Kappa Statistic value for the... | Download Scientific Diagram

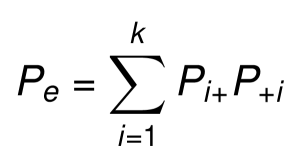

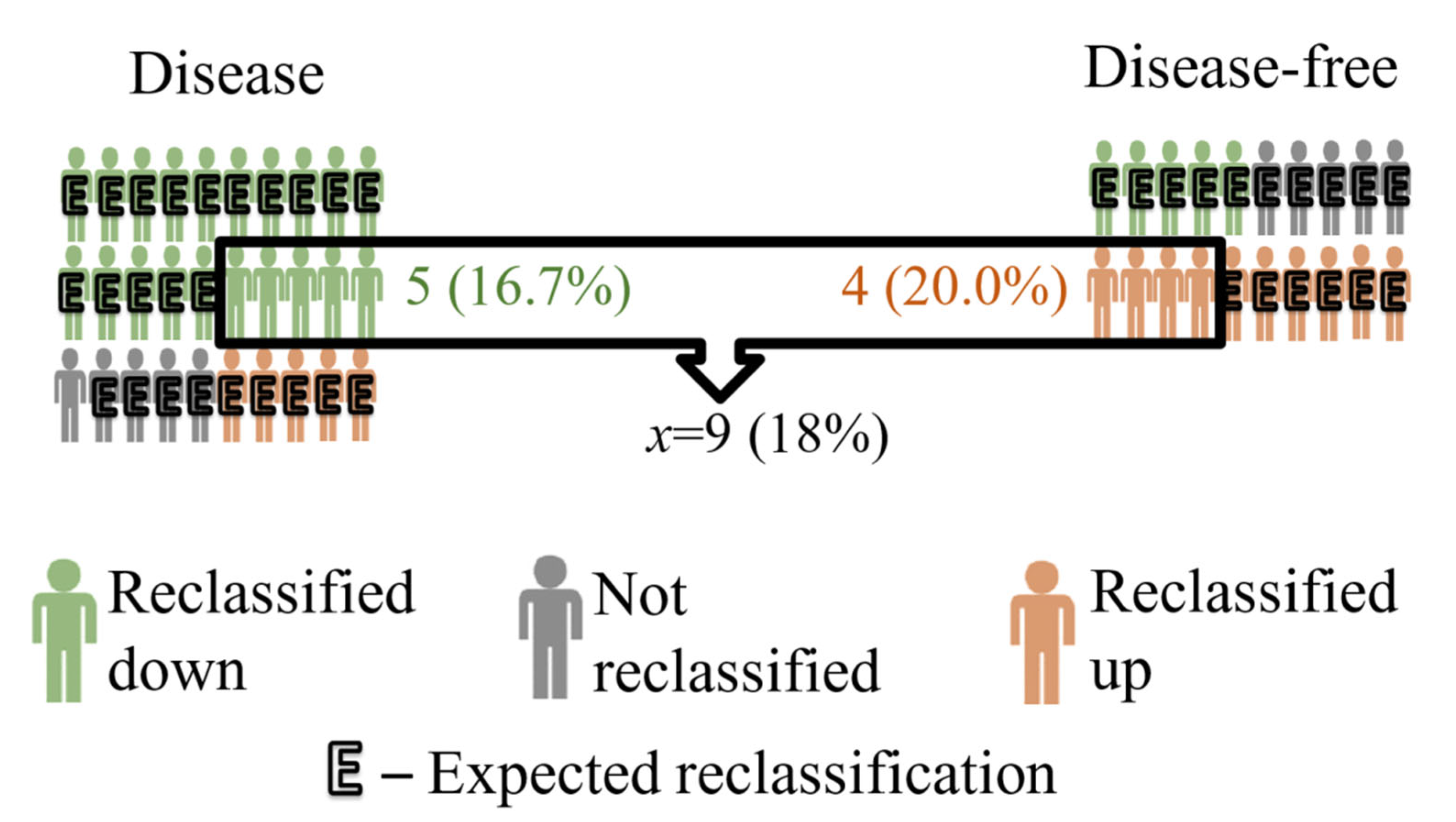

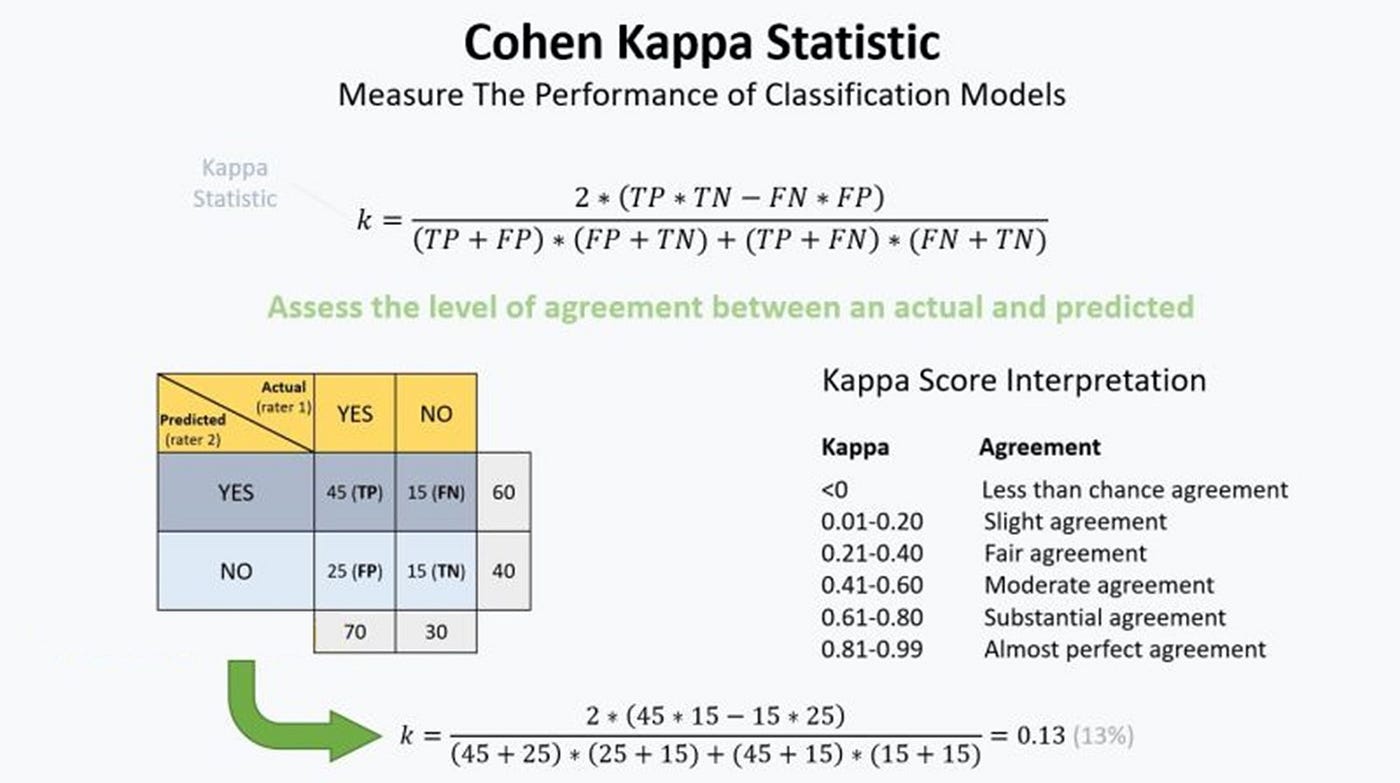

IJERPH | Free Full-Text | Cohen’s Kappa Coefficient as a Measure to Assess Classification Improvement following the Addition of a New Marker to a Regression Model

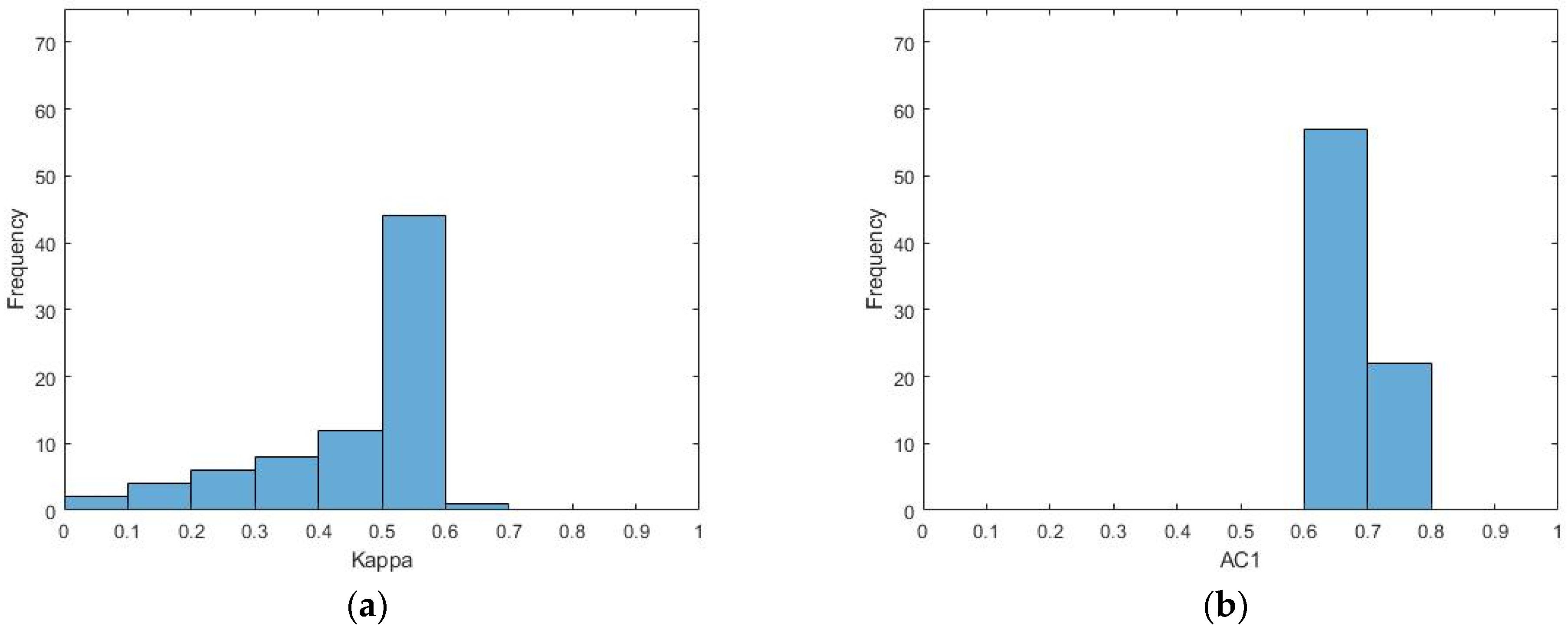

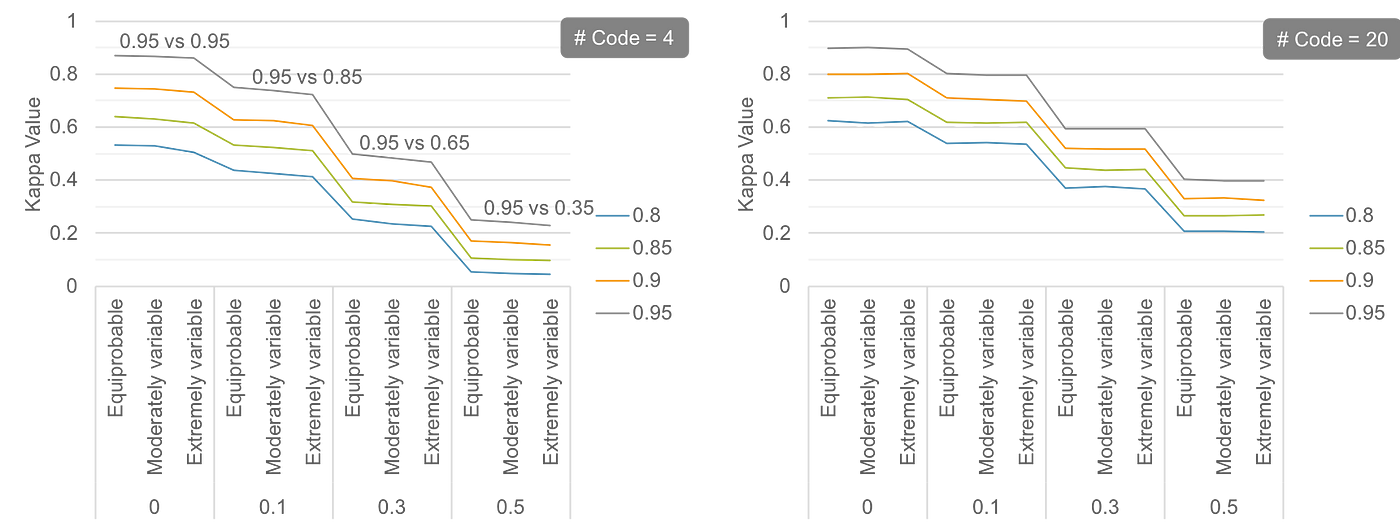

Symmetry | Free Full-Text | An Empirical Comparative Assessment of Inter-Rater Agreement of Binary Outcomes and Multiple Raters

Putting the Kappa Statistic to Use - Nichols - 2010 - The Quality Assurance Journal - Wiley Online Library

GitHub - thomaspingel/cohens-kappa-matlab: This is a simple implementation of Cohen's Kappa statistic, which measures agreement for two judges for values on a nominal scale. See the Wikipedia entry for a quick overview,

![Sample-size calculations for Cohen's kappa (PDF) | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/c59f58e51e97eaa055b450d9f71cac402d7e45ad/3-Table1-1.png)